Unveiling the Magic Behind Augumenta’s AR Accuracy: Camera Calibration in Smartglasses

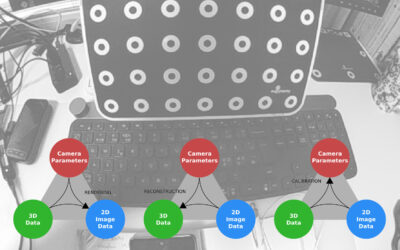

Introducing camera calibration and its importance in high-accuracy augmented reality

In the field, at an expo or with customers? Trying to estimate the field of view of your smartglasses? Here's a computer vision engineer's trick just for you...

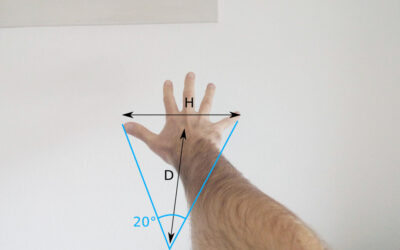

While a good geometric camera calibration procedure will give you accurate results, it can be hard to quickly estimate the field of view (FOV) of smartglasses displays and cameras while in the field. So here is a simple (if a little dirty) way to get an approximate figure: hold your open hand at arm's length and use the span between the tips of your thumb and little finger as an angular ruler.

You could also use the width of your fist, which is roughly 10 degrees, but that is not very practical unless you’re testing old smartglasses with a narrow field of view.

This approximation is well known to astronomers and holds well across ages and body sizes because a longer arm is roughly compensated by a larger hand. However, the quoted angle varies from 15 to 25 degrees depending on the source. I therefore recommend that you measure your own value with this simple formula:

angle = atan(0.5 * H / D) * 360 / π

where H is the distance between the tips of your thumb and little finger with your hand spread open, and D is the distance between your face and your hand (the length of your arm), both in the same unit. For me the ‘magic’ angle is 17.5 degrees.
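To make the formula concrete, here is a minimal Python sketch. The function name `hand_angle_deg` and the sample measurements (a 20 cm hand span held 65 cm from the face) are illustrative assumptions rather than the measurements behind the figure quoted above; substitute your own.

```python
import math


def hand_angle_deg(span: float, distance: float) -> float:
    """Angle in degrees subtended by a hand span H seen from distance D.

    Implements angle = atan(0.5 * H / D) * 360 / pi.
    H and D must be expressed in the same unit.
    """
    if span <= 0 or distance <= 0:
        raise ValueError("span and distance must be positive")
    return math.atan(0.5 * span / distance) * 360.0 / math.pi


# Hypothetical measurements in centimetres, for illustration only.
print(f"{hand_angle_deg(20.0, 65.0):.1f} degrees")
```

With these sample values the result lands close to 17.5 degrees; a shorter arm or a wider span pushes the angle up.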

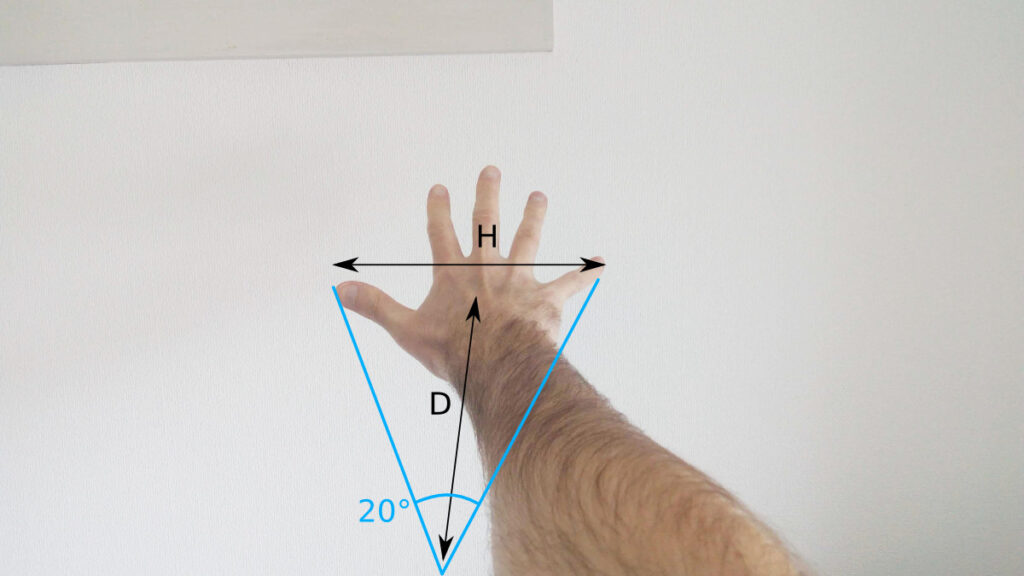

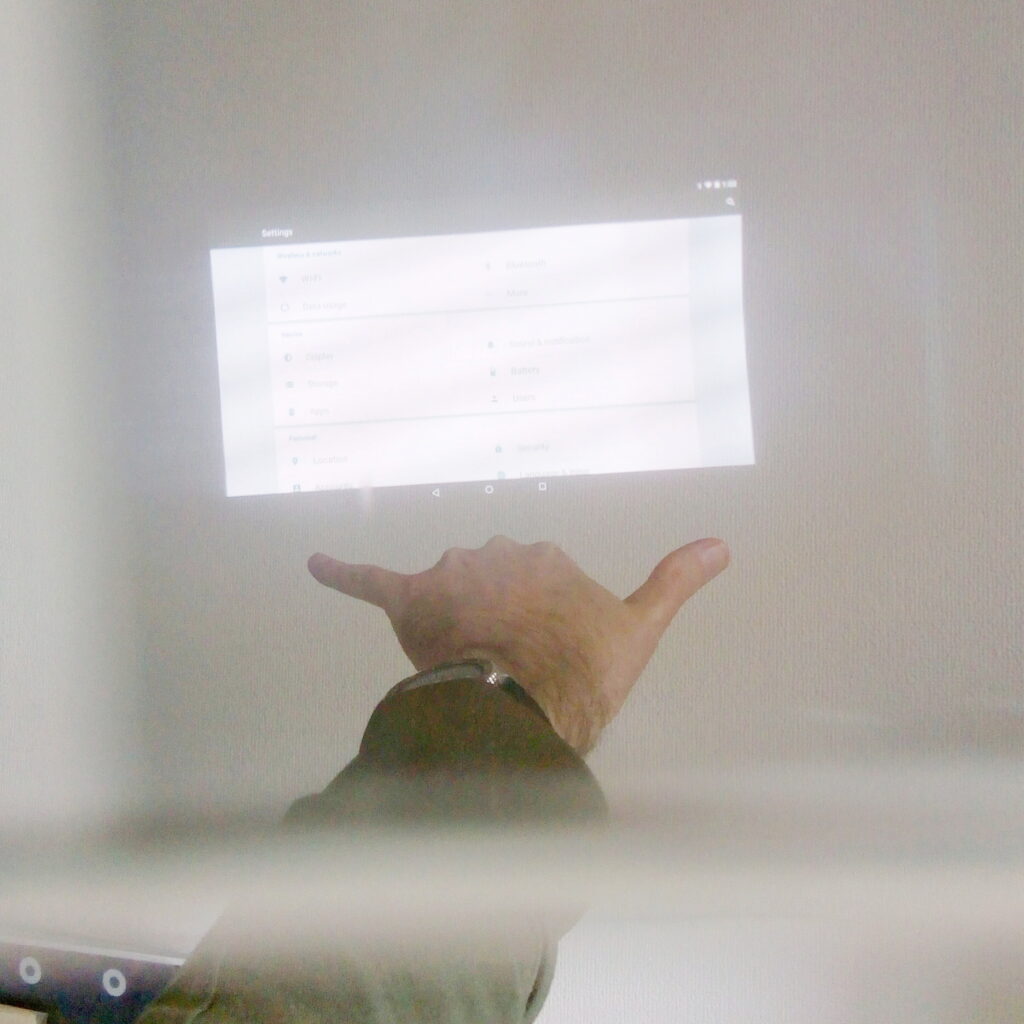

I took the image above with my phone, which is (of course!) precisely calibrated: its horizontal field of view is 63 degrees. Using our quick and dirty technique, we can see in the picture below that my hand fits the frame about 4 times (minus ¼ for the last copy):

We can conclude that the horizontal FOV is about (4 – ¼) * 17.5 = 66 degrees. Pretty close to the calibrated value of 63! This also works with non-transparent displays like the ones found on many monocular glasses (Vuzix, Iristick, Realwear,…). For example, the display of the Realwear HMT1 is almost exactly one ‘hand’ for me, hence a field of view of 17.5 degrees. Did I just use ‘hand’ as an angular unit? 🙂 The same measurement on the Iristick G2 gives us two thirds of a hand, or 12 degrees.
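The hand-counting arithmetic above is simple enough to script. The sketch below is only an illustration: `fov_from_hands` is a hypothetical helper name, and the default of 17.5 degrees is the author's personal hand angle, so replace it with your own.

```python
def fov_from_hands(hand_count: float, hand_deg: float = 17.5) -> float:
    """Approximate field of view as a number of 'hands' times the hand angle."""
    if hand_count < 0 or hand_deg <= 0:
        raise ValueError("hand_count must be >= 0 and hand_deg must be > 0")
    return hand_count * hand_deg


# Phone camera example: four hands minus a quarter, versus 63 degrees calibrated.
estimate = fov_from_hands(4 - 0.25)
calibrated = 63.0
error = estimate - calibrated
print(f"estimate: {estimate:.1f} deg, error: {error:+.1f} deg "
      f"({100 * error / calibrated:+.1f}%)")

# Monocular display example: two thirds of a hand.
print(f"two thirds of a hand: {fov_from_hands(2 / 3):.1f} deg")
```

Treating the FOV as a plain multiple of the hand angle is part of what makes the method quick and dirty; it is meant for a sanity check, not as a substitute for calibration.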

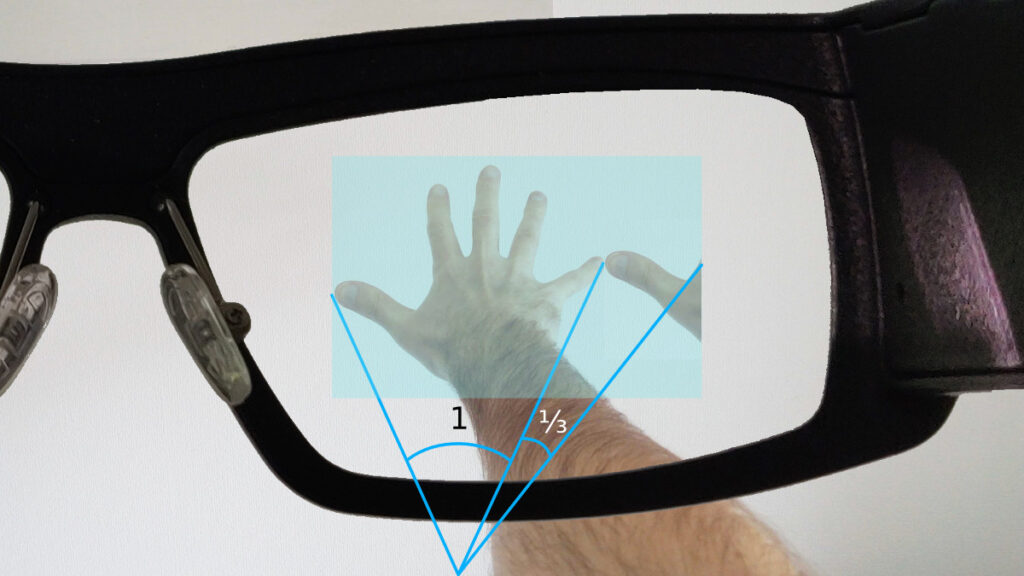

Here’s a composite image of the idea with an active display area (in light blue) that is 1 ⅓ times the width of my hand, and thus about 23 degrees wide:

We can also work through a real-life example. It’s quite difficult to get a meaningful ‘screenshot’ through AR glasses, so the quality is not stellar. Still, we can use what we’ve learned to estimate the field of view of my good old BT-350:

We would need about 1 + ¼ times my hand to cover the field of view of the display, hence 1.25 * 17.5 = 22 degrees. The official specs from Epson say 23 degrees of diagonal FOV, which at 16:9 means about 20 degrees of horizontal FOV. That is a 2-degree (10%) difference, which can easily be attributed to the strange setup required to take this kind of picture. Try holding your hand in front of you as explained here and you will quickly see how restrictive this FOV is for augmented reality…
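The diagonal-to-horizontal conversion used above can be sketched as follows. This assumes a flat, rectilinear virtual image with square pixels, which is a simplification of real display optics; the function name `horizontal_fov_deg` is hypothetical.

```python
import math


def horizontal_fov_deg(diagonal_fov_deg: float,
                       aspect_w: float = 16.0,
                       aspect_h: float = 9.0) -> float:
    """Convert a diagonal FOV to a horizontal FOV for a given aspect ratio.

    Assumes a flat (rectilinear) image plane: the half-width of the image is
    the half-diagonal scaled by w / sqrt(w^2 + h^2), and each half-extent
    equals tan(half-angle) at unit viewing distance.
    """
    if not 0 < diagonal_fov_deg < 180:
        raise ValueError("diagonal FOV must be between 0 and 180 degrees")
    half_diag = math.tan(math.radians(diagonal_fov_deg) / 2)
    half_width = half_diag * aspect_w / math.hypot(aspect_w, aspect_h)
    return 2 * math.degrees(math.atan(half_width))


# 23 degrees of diagonal FOV at 16:9, as in the spec quoted above.
print(f"{horizontal_fov_deg(23.0):.1f} degrees horizontal")
```

For a narrow FOV like this one, simply scaling the diagonal angle by 16 / sqrt(16² + 9²) gives nearly the same answer; the tangent form matters more for wide displays.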

We have found this simple approach to be very effective when discussing smartglasses options with our customers, as it allows them to quickly understand why the field of view is one of the most critical optical characteristics of glasses (together with the eye box, maybe a good subject for another post…).

Have you encountered challenges in explaining technical concepts related to smartglasses? We’d love to hear your thoughts and experiences!
